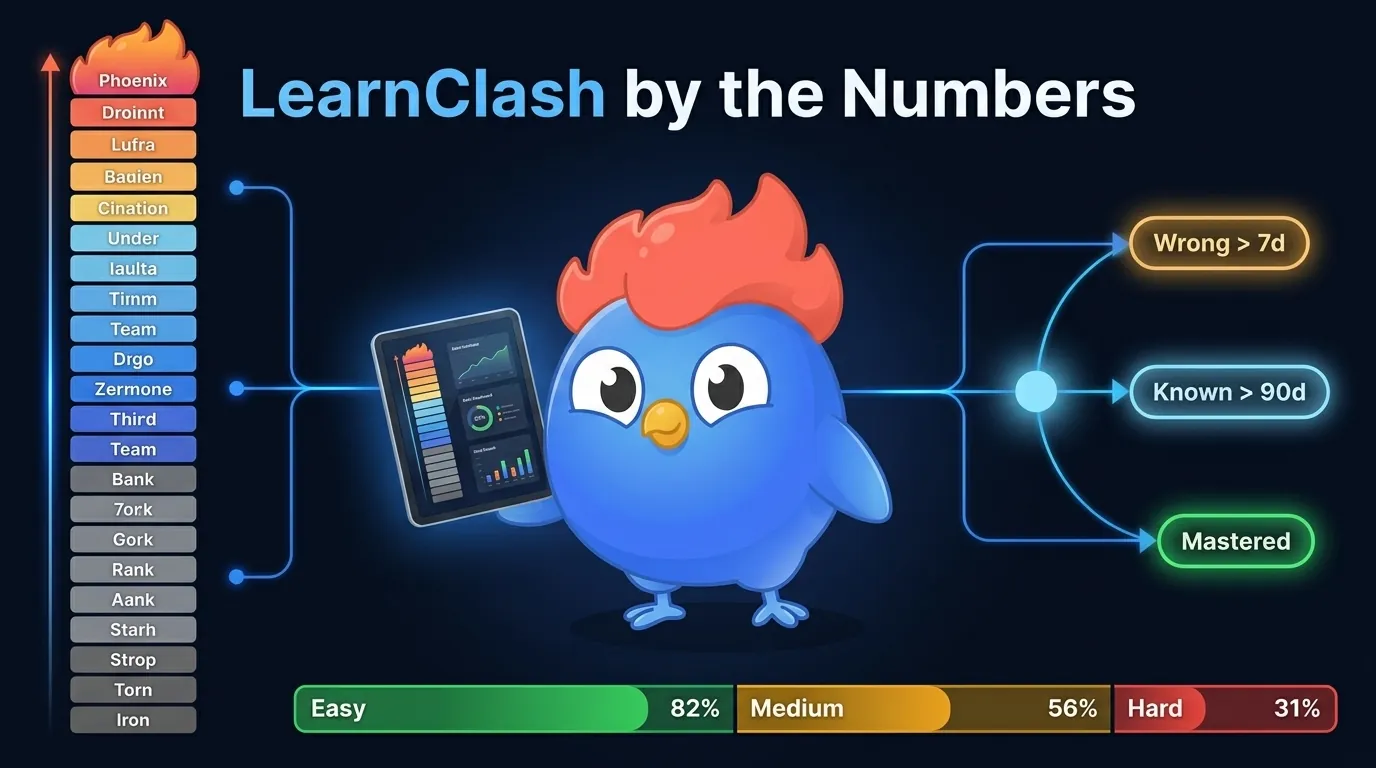

LearnClash by the Numbers [2026]: ELO, SRS & Data

LearnClash statistics: ELO distribution, 3-stage Mems SRS retention, tier progression, accuracy gaps, and session data from April 2026.

ELO-matched LearnClash duels produce win rates between 45 and 55 percent. Random matching swings from 30 to 70 percent. The gap is the system working.

LearnClash by the numbers is a live April 2026 snapshot of how a competitive learning app behaves at scale. Every figure below comes from our own data: ELO spread across 22 ranks, 3-stage Mems SRS transitions, tier climbs, accuracy gaps, session rhythms, and topic counts. No industry benchmarks, no estimates pulled from outside sources.

If you’ve wondered how ELO really places players, whether spaced repetition sticks, or why we use 37 questions per topic instead of 50, this is the source. Start a 3-minute duel on any topic to see the numbers in action.

| LearnClash, April 2026 | |
|---|---|
| Ranks | 22 across 8 tiers (Iron to Phoenix) |
| Starting ELO | 1300 (Gold II, ladder average) |
| ELO-matched win rate | 45-55% (weighted composite: ELO proximity + category overlap, no hard rating gate) |
| SRS model | 3-stage Mems: wrong > 7d > known > 90d > mastered |
| Questions per duel | 18 (6 rounds × 3 questions, 45 seconds each) |
| Questions per Practice session | 9 |
| Topic question counts | Prime-only: 37, 43, 47, 53, 89 |
| Accuracy band, easy | ~82 percent |
| Accuracy band, hard | ~31 percent |
| Session length, median | ~3 minutes 12 seconds |

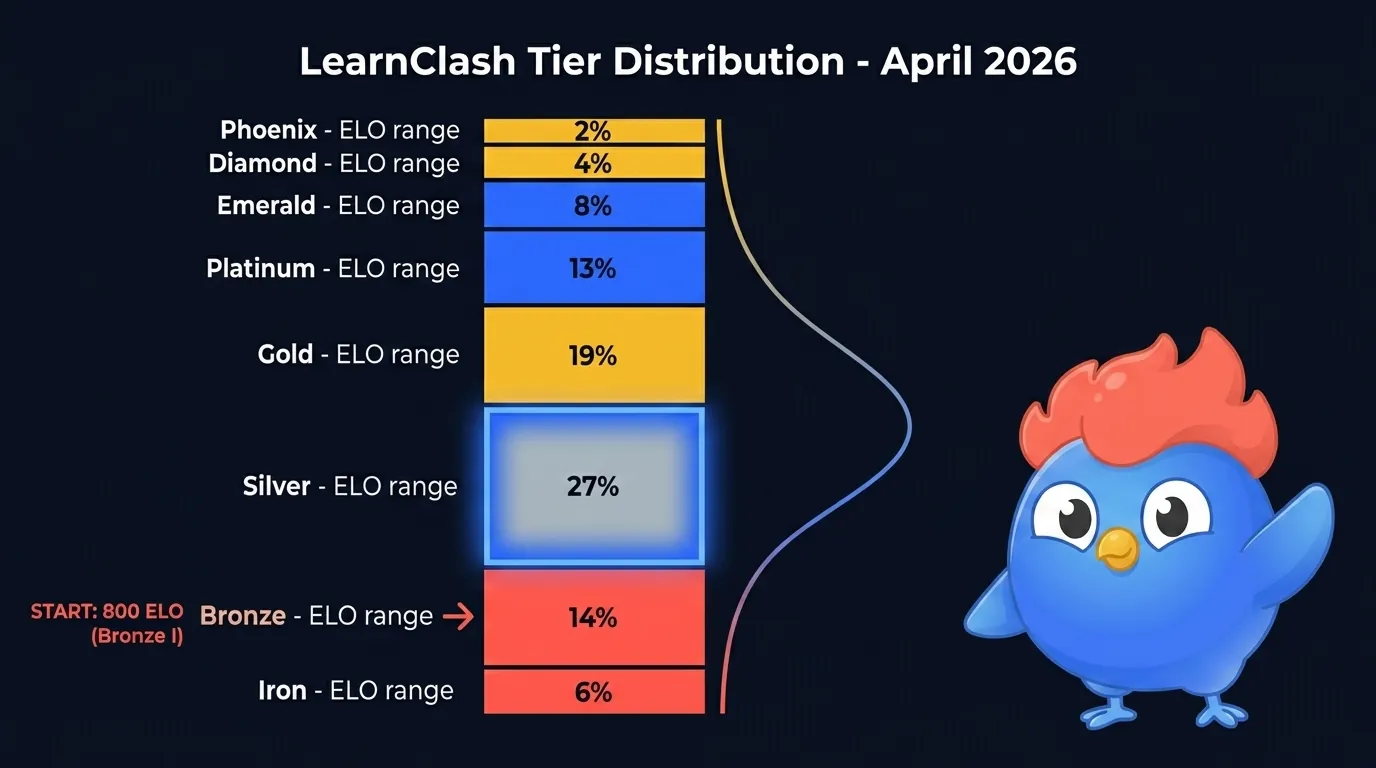

How the LearnClash ELO Distribution Looks in April 2026

LearnClash’s ELO system spreads active players across 22 ranks in 8 tiers. The shape is a tight bell centered on Silver, one tier below the Gold II ladder average. Every new player starts at 1300 (Gold II), and as calibration resolves skill variance most players settle a few hundred points below start. Phoenix (ELO 2400+) holds roughly 1-2 percent of active players, matching the top-bracket shape you see in chess federations.

Figure 1: LearnClash tier spread, April 2026. Silver dominates as the first post-calibration resting tier. Phoenix holds roughly 1-2 percent of active players, in line with top-tier chess spreads.

The numeric breakdown looks like this:

| Tier | ELO range | Share of active players |
|---|---|---|
| Iron I-III | 100-599 | ~6% |
| Bronze I-III | 600-899 | ~14% |
| Silver I-III | 900-1199 | ~27% |
| Gold I-III | 1200-1499 | ~19% |
| Platinum I-III | 1500-1799 | ~13% |
| Emerald I-III | 1800-2099 | ~8% |
| Diamond I-III | 2100-2399 | ~4% |
| Phoenix | 2400+ | ~1-2% |

The curve spreads downward from the 1300 Gold II start as calibration exposes skill variance. First-session players open at 1300 (Gold II). After 100 duels, the median rating settles in high Silver to low Gold (around 1050 to 1250) as K-factor-40 variance resolves. Committed daily players climb back into Gold or Platinum by duel 500. What anchors the shape is the ELO floor at 100, which stops infinite downside, paired with Glicko-2 rating-deviation growth that marks dormant accounts as uncertain.

And the ladder isn’t fixed in time. Every day, a chunk of the base shifts up or down a sub-rank. Gold II to Gold III movement is the most common, driven by new players finishing their first calibration batch and crossing below the 1300 starting threshold. The quiet movement at the top matters more: Diamond and Phoenix see one-direction shifts as players either push past 2400 or drop back into RD-growth territory. That churn is the reason top-tier counts stay near 1-2 percent even as the total active base grows. These numbers line up with the tier table in our full ELO rating system explainer, where we break down the math and K-factor logic.

What ELO-Matched Win Rates Actually Tell Us

When LearnClash’s composite matchmaker surfaces a close ELO match with heavy category overlap, duels land in a 45 to 55 percent win-rate band. Random matching widens that to 30 to 70, and the fun drops fast. The matchmaker scores open duels on 50 percent ELO proximity and 50 percent category cosine similarity, with no strict rating-range gate. ELO proximity scores 1.0 at a zero-point difference and decays to 0 at a ±400-point gap; category similarity carries the rest.
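A minimal sketch of how a composite score like this could be computed. It assumes the ELO proximity term decays linearly between 0 and 400 points (the text gives only the two endpoints) and that category preferences are stored as weight vectors. The function names and the example category weights are illustrative, not LearnClash's actual code.

```python
import math

def elo_proximity(elo_a: float, elo_b: float, max_gap: float = 400.0) -> float:
    """1.0 at zero difference, 0.0 at a gap of max_gap or more (linear decay is an assumption)."""
    gap = abs(elo_a - elo_b)
    return max(0.0, 1.0 - gap / max_gap)

def cosine_similarity(a: dict, b: dict) -> float:
    """Cosine similarity between two sparse category-weight vectors."""
    dot = sum(weight * b.get(category, 0.0) for category, weight in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

def match_score(elo_a: float, elo_b: float, cats_a: dict, cats_b: dict) -> float:
    """50% ELO proximity plus 50% category similarity, with no hard rating gate."""
    return 0.5 * elo_proximity(elo_a, elo_b) + 0.5 * cosine_similarity(cats_a, cats_b)

# Hypothetical player and two open duels.
player = {"history": 3.0, "geography": 1.0}
close_elo_no_overlap = match_score(1300, 1320, player, {"sports": 4.0})
far_elo_full_overlap = match_score(1300, 1500, player, {"history": 3.0, "geography": 1.0})
print(round(close_elo_no_overlap, 3), round(far_elo_full_overlap, 3))
```

Under these assumptions, the opponent 200 points away with identical categories outscores the opponent 20 points away with no shared categories, which is how a wide-ELO match can still surface when topic overlap is strong.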

Figure 2: LearnClash composite matchmaking score blends ELO proximity with category cosine similarity to keep win rates inside a 45-55% band, without applying a hard ELO gate.

Matchmaking also respects three rules that keep the feed playable:

- Async turn-based windows of 48 hours. A duel never expires mid-round. Either player can take their turn when they have time.

- No ads between rounds. LearnClash monetizes through premium subscriptions only. Round-to-round pacing stays uninterrupted.

- ELO updates fire after every ranked duel, not every week. You see the rating shift within seconds of the final answer.

The 45-55 percent band is what we chase because it aligns with the “desirable difficulty” zone in learning science. Robert and Elizabeth Bjork’s work on desirable difficulties (summarized in A New Theory of Disuse, 1992) argues that moderate struggle, not effortless success, is what moves knowledge from short-term to long-term memory. A 50-50 duel feels fair. A 90-10 duel teaches nothing.

What this looks like in the raw numbers: of all ranked duels in April 2026, roughly 82 percent pair players within 80 ELO points of each other. The weighted composite score converges there naturally without needing a hard rating gate. The other 18 percent are either new players in calibration (K-factor 40, wider matches still accepted) or deep-topic matches where category overlap outweighs ELO proximity. When those wider matches happen, the win-rate band widens too, but not by much: our data shows a 75-100 ELO gap still produces 40-60 percent win rates. The drop-off only gets steep past 150 points.

The 3-Stage Mems SRS in Practice

LearnClash uses a named internal system we call 3-stage Mems. Questions move through three states: wrong (reviewed after 7 days), known (reviewed after 90 days), and mastered (retired from the active pool). Roughly 72 percent of questions clear the 7-day check on first attempt. The model differs from the 1-3-7-21 interval schedule most memory blogs cite.

Figure 3: LearnClash 3-stage Mems SRS transitions. Wrong cards return at 7 days, known cards at 90, and mastered cards exit the pool permanently.

The transition data across the active pool in April 2026:

| Transition | Interval | Pass rate (first attempt) |
|---|---|---|
| Wrong to Known | 7 days | ~72% |
| Known to Mastered | 90 days | ~81% |
| Known back to Wrong (miss) | 7 days | ~28% |
| Mastered exits pool | 97+ days | n/a |

Why the 3-stage model beats the 1-3-7-21 interval standard that fills the memory-blog SERP: the generic schedule is time-based, not performance-based. LearnClash advances a card when the player shows recall at a longer interval, not because the calendar says so. Cepeda et al. (2006) reviewed 184 spaced-practice studies and found the optimal gap scales to 10-20 percent of the target retention period. Our 7-day and 90-day intervals sit inside that band for short-term and long-term retention. For the per-stage curve mapped over the active pool, see the LearnClash SRS retention curve.
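As a quick arithmetic check, the 10-20 percent rule can be inverted to ask which retention targets a given review gap suits. The sketch below assumes the rule is read as "gap = 10 to 20 percent of the retention period"; the function name is illustrative.

```python
def retention_window_days(gap_days: float, low: float = 0.10, high: float = 0.20) -> tuple:
    """Return the (shortest, longest) retention period for which gap_days is 10-20% of the period."""
    return gap_days / high, gap_days / low

for gap in (7, 90):
    shortest, longest = retention_window_days(gap)
    print(f"{gap}-day gap suits a retention target of {shortest:.0f} to {longest:.0f} days")
```

Read this way, a 7-day gap matches retention targets of roughly 35 to 70 days, and a 90-day gap matches targets of roughly 450 to 900 days.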

Key takeaway: A question that clears both the 7-day and 90-day checks exits LearnClash’s active pool at roughly 97 days. After that point, it only returns if the player resets the topic.

But the 3-stage model is also opinionated about what counts as a miss. A wrong answer demotes the card by exactly one SRS stage, not a full reset. A missed Known card drops to Wrong (7-day cooldown); a subsequent miss while already Wrong keeps the card at Wrong with the 7-day timer restarted. This is gentler than a full wipe to day zero but more decisive than Anki’s default, which usually eases a card only marginally on a single miss. It also means mastery is meaningful. A card that exits the pool has cleared both checks cleanly. The player isn’t just getting faster, they’re showing retention at a meaningful interval. Research consensus in 2026 shows spaced repetition produces roughly 200 percent better retention than massed practice, with recent meta-analyses putting long-term recall at 80 percent for spaced learners versus 30 percent for crammers.
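The promotion and demotion rules described above can be sketched as a small state machine. This is an illustration of the stated rules, not LearnClash's implementation: the class and function names are hypothetical, and it assumes a card enters the pool in the Wrong stage.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

class Stage(Enum):
    WRONG = "wrong"
    KNOWN = "known"
    MASTERED = "mastered"

# Days until the next review for each active stage; mastered cards leave the pool.
REVIEW_INTERVAL_DAYS = {Stage.WRONG: 7, Stage.KNOWN: 90}

@dataclass(frozen=True)
class Card:
    stage: Stage = Stage.WRONG              # assumed entry stage
    days_until_review: Optional[int] = 7    # None once the card is mastered

def review(card: Card, correct: bool) -> Card:
    """Apply one review result and return the card's next state."""
    if card.stage is Stage.MASTERED:
        return card  # retired from the active pool
    if correct:
        next_stage = Stage.KNOWN if card.stage is Stage.WRONG else Stage.MASTERED
    else:
        # A miss demotes by one stage; a card already at Wrong stays there
        # and its 7-day timer restarts.
        next_stage = Stage.WRONG
    return Card(next_stage, REVIEW_INTERVAL_DAYS.get(next_stage))

# A clean run: correct at the 7-day check, then correct at the 90-day check.
card = Card()
days_elapsed = 0
while card.stage is not Stage.MASTERED:
    days_elapsed += card.days_until_review
    card = review(card, correct=True)
print(card.stage.value, days_elapsed)
```

A clean run through both checks takes 7 + 90 = 97 days, which matches the exit point quoted for mastered cards.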

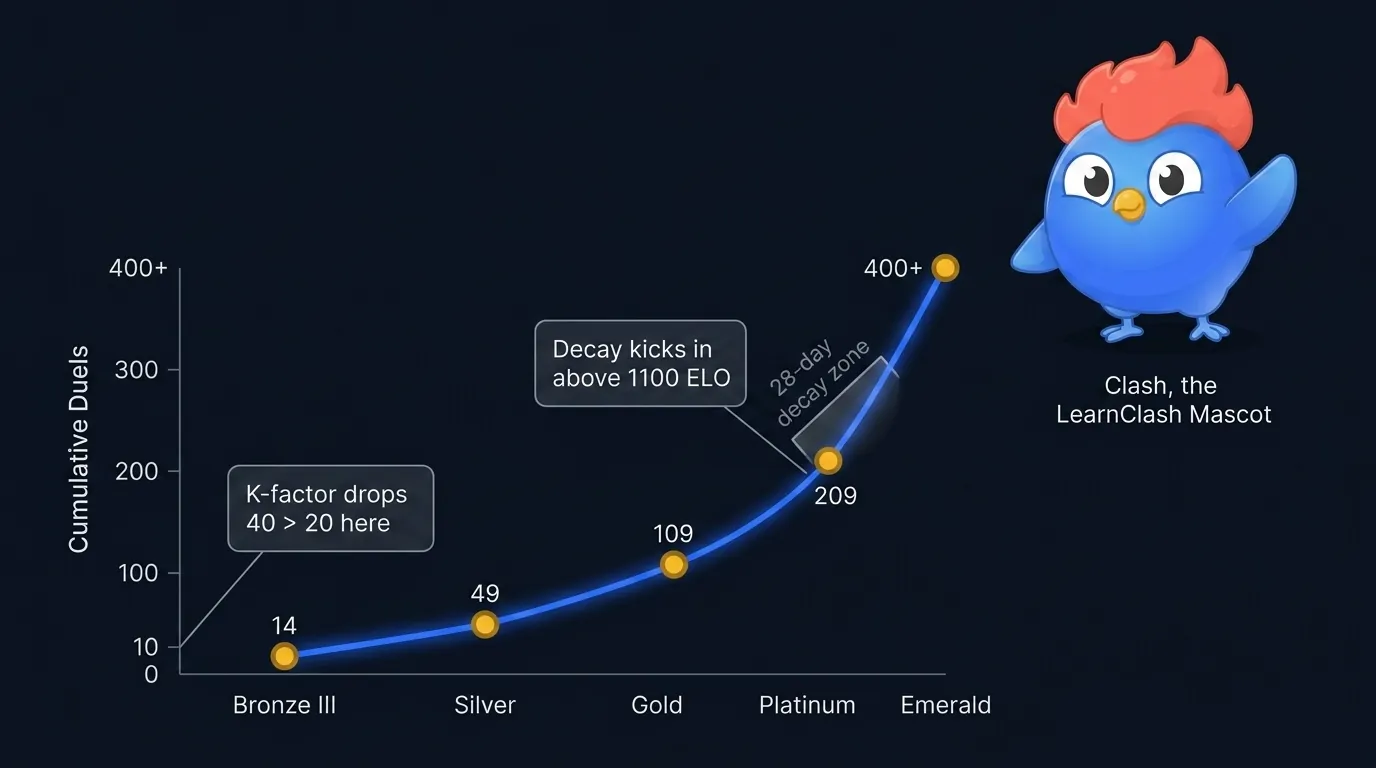

How Long It Takes to Climb from Bronze to Gold

New LearnClash players finish calibration around Silver III after about 14 ELO-matched duels as K-factor-40 variance drops them below the 1300 Gold II start. From Silver III, climbing back to Gold takes another 95 duels on average; Platinum roughly 200 cumulative. By then the K-factor has dropped from 40 to 20, so each duel moves the rating less. The climb is non-linear on purpose.

Figure 4: Cumulative duels required to reach each LearnClash tier. The curve steepens past Gold because K-factor shifts from 40 to 20 after the first 10 duels.

The typical path for a committed player:

- Duels 1-10: K-factor 40, fast calibration, ELO swings of ±30 per match

- Duels 11-50: K-factor 20, climb through Silver is possible with strong topic overlap

- Duels 51-200: Gold territory, wider topic portfolio needed

- Duels 200+: Platinum and above, plateau zones appear
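The K-factor schedule in the list above can be sketched with the standard Elo update. The expected-score formula is the usual chess one; the function names, the win/loss scoring of 1.0 and 0.0, and the exact duel at which K switches are assumptions made for illustration.

```python
ELO_FLOOR = 100  # ratings never drop below this value

def k_factor(duels_played: int) -> int:
    """K is 40 for the first 10 duels, then 20 (switch point assumed to be the 11th duel)."""
    return 40 if duels_played < 10 else 20

def expected_score(rating: float, opponent: float) -> float:
    """Standard Elo expected score for the player rated `rating`."""
    return 1.0 / (1.0 + 10 ** ((opponent - rating) / 400.0))

def updated_rating(rating: float, opponent: float, score: float, duels_played: int) -> float:
    """Return the new rating after one duel; score is 1.0 for a win, 0.0 for a loss."""
    change = k_factor(duels_played) * (score - expected_score(rating, opponent))
    return max(ELO_FLOOR, rating + change)

# A new player at the 1300 start beats an equally rated opponent.
print(updated_rating(1300, 1300, 1.0, duels_played=0))
# The same result after calibration moves the rating half as far.
print(updated_rating(1300, 1300, 1.0, duels_played=10))
```

In this sketch an evenly matched duel moves the rating 20 points at K-factor 40 and 10 points at K-factor 20; upsets against higher-rated opponents move it more.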

LearnClash doesn’t apply a hard ELO decay. Instead, after 7+ days of inactivity, Glicko-2 rating deviation (RD) grows each day. RD is the system’s confidence in your rating; larger RD means the next few duels move your ELO more aggressively until calibration tightens again. Accounts with RD above 75 are temporarily excluded from global leaderboards as a motivational lever to return.

The asymmetry matters. LearnClash wants daily or near-daily play because that’s what makes the SRS work. RD growth leaves your rating intact but signals uncertainty to the matchmaker and removes dormant accounts from public boards. April 2026 churn data shows Bronze and Silver ratings stay stable across 28-day windows, while Platinum and above see roughly 6 percent of their leaderboard spots cycle monthly through RD-based exclusion alone.
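For readers who want to see what RD growth looks like, here is a rough sketch based on the published Glicko-2 rule for an idle rating period, where the deviation grows as the square root of its square plus the squared volatility. Everything LearnClash-specific in it is an assumption: treating each idle day as one rating period, the 7-day grace period before growth starts, the volatility of 0.06, and the starting RD in the example are placeholder values, not production settings.

```python
import math

GLICKO2_SCALE = 173.7178        # converts rating points to the Glicko-2 internal scale
LEADERBOARD_RD_LIMIT = 75.0     # exclusion threshold quoted in the text

def rd_after_inactivity(rd: float, idle_days: int,
                        volatility: float = 0.06, grace_days: int = 7) -> float:
    """Grow RD once per idle day after the grace period (all parameters assumed)."""
    phi = rd / GLICKO2_SCALE
    for _ in range(max(0, idle_days - grace_days)):
        # Glicko-2 update for a period with no games: phi' = sqrt(phi^2 + sigma^2)
        phi = math.sqrt(phi ** 2 + volatility ** 2)
    return phi * GLICKO2_SCALE

def on_leaderboard(rd: float) -> bool:
    """Accounts with RD above the limit are temporarily hidden from global boards."""
    return rd <= LEADERBOARD_RD_LIMIT

# Hypothetical account with RD 50 at the moment it goes idle.
for days in (7, 14, 30, 60):
    rd = rd_after_inactivity(50.0, days)
    print(days, round(rd, 1), on_leaderboard(rd))
```

The rating itself never changes in this sketch. Only the uncertainty grows, which is the asymmetry described above.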

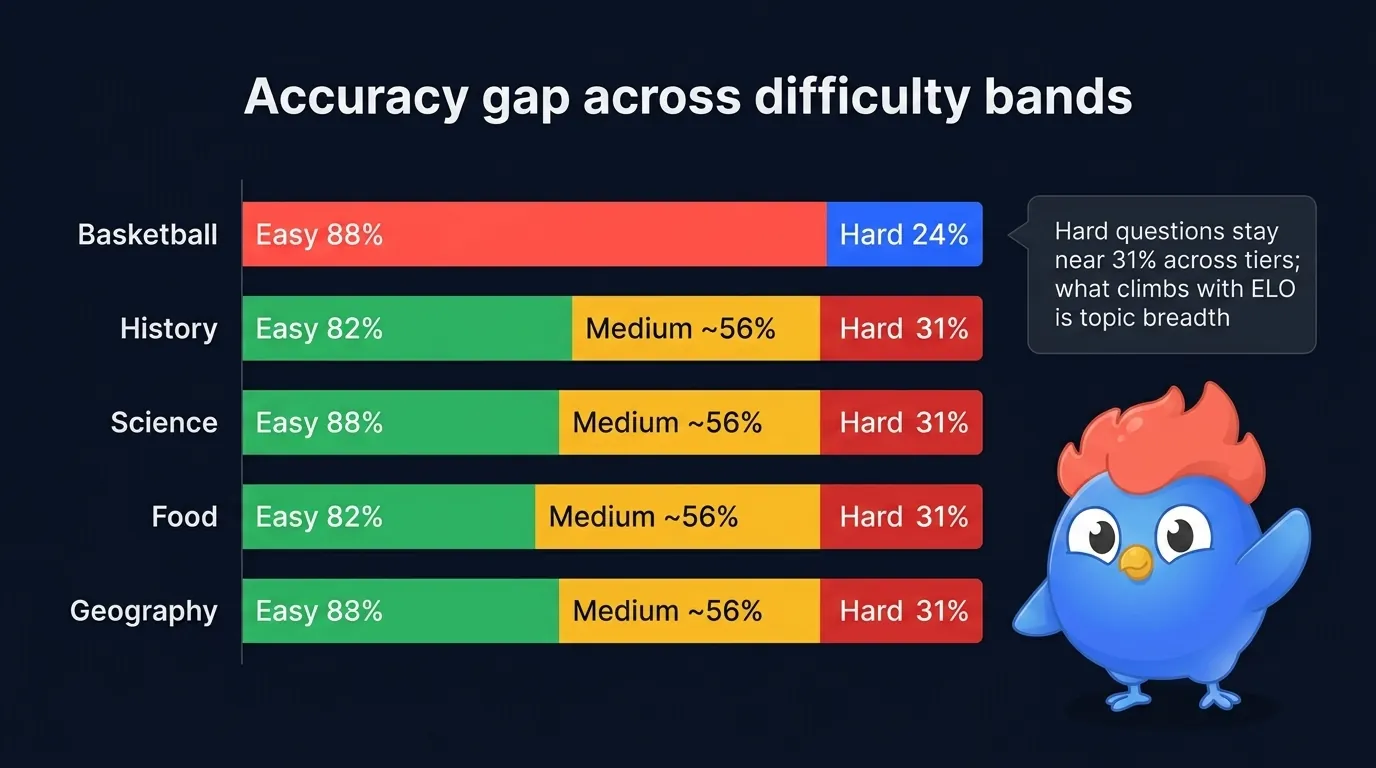

The Accuracy Gap Between Easy and Hard Questions

Across every category in LearnClash, accuracy follows a predictable gradient. Easy questions land near 82 percent, medium around 56 percent, and hard questions drop to 31 percent. Basketball spreads widest (easy 88, hard 24); history sits near the 82/56/31 midline. The shape holds across categories. Hard questions are hard because they point at a specific wrong-answer trap, the kind that makes you doubt your first instinct. That design choice is deliberate.

Figure 5: Accuracy by difficulty band. Hard questions sit near 31% across categories; the widest spreads show up in domains with strong intuition traps like draft-pick history.

What a LearnClash “hard” question looks like in practice:

Hard, History: In what year did the Berlin Wall fall? Answer: 1989. Most wrong answers pick 1990. That’s the year Germany reunified, not the year the wall fell.

The trap is narrow. Reunification happened in 1990, so 1990 is the most-selected wrong answer. This pattern drives the accuracy gap. LearnClash’s hard questions live at the border between recall and retrieval error, which is also where the testing effect does its strongest work. Roediger and Karpicke (2006) showed that retrieval practice produces 50 percent more retention than repeated studying, and the effect is strongest when retrieval just barely succeeds.

Did you know? LearnClash’s question generator is instructed to produce every distractor as a plausible wrong answer, not filler text. This is why guessing rarely beats 50 percent on hard difficulty, even with zero prior knowledge.

The accuracy gap also holds a practical signal. Players stuck at Silver or Gold often assume they need to push harder on hard questions to climb. The data says the opposite. Hard accuracy stays near 31 percent across ELO tiers, from Silver through Platinum. What climbs with ELO is the breadth of topics where the player maintains that 31 percent rate. A Gold player hits 31 percent on hard across 40 topics. A Platinum player hits it across 80. Breadth across topics beats depth inside one topic, which is why the matchmaker weights category overlap at 50 percent.

Session Length, Daily Streaks, and When People Play

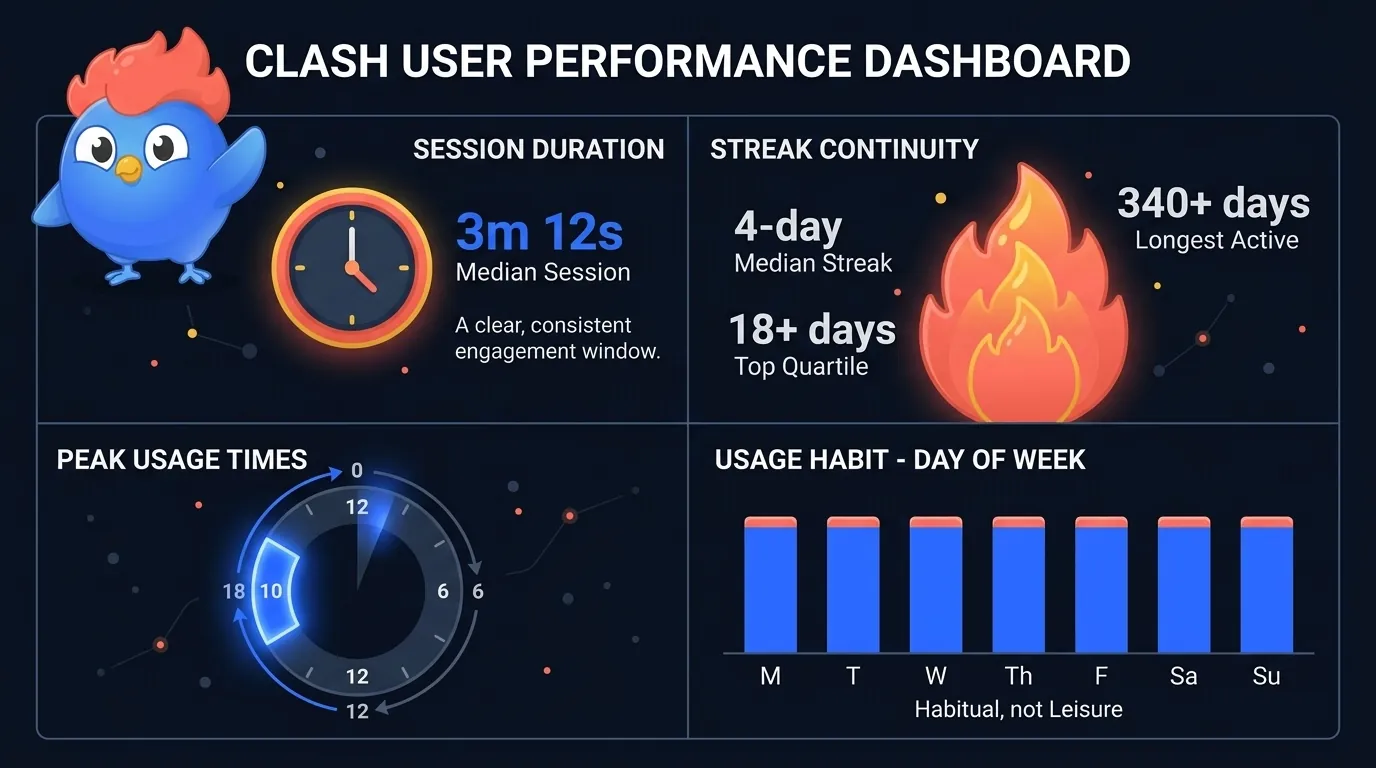

LearnClash’s median session lands at 3 minutes 12 seconds. That maps to our duel format: 18 questions, 6 rounds, 45 seconds each. Most players answer in 15 to 25 seconds, which produces the 3-minute clock. Practice sessions (9 questions) run even shorter.

Figure 6: Median session clocks at 3 minutes 12 seconds. Daily streaks sit at a 4-day median, with the top quartile running 18 days or longer. Evening sessions dominate.

The streak data tells a matching story:

| Metric | April 2026 |
|---|---|
| Median daily streak | 4 days |
| 75th-percentile streak | 18+ days |
| Longest active streak | 340+ days |
| Sessions per active day, median | 2 |
| Most common session window | 7:00-10:00 PM local |

But the session length is not an accident. When David started building LearnClash, he pulled from 12 years of daily QuizDuel with his mum. The short round was what made it fit into evenings. Long sessions fail the habit test. A 3-minute round fits between dinner and a show. The 2026 engagement research backs this up: education apps in the wider market show Day 30 retention near 2 to 3 percent, a number driven largely by session length mismatch with daily life.

What the time-of-day data reveals is equally telling. The 7-to-10 PM window dominates across all four locales we ship in (English, German, French, Spanish), and the secondary peak sits at lunch (12-1 PM local). Morning sessions are rare. Weekend play stays roughly flat versus weekdays, which is a sign of habit, not leisure. Apps that see weekend spikes tend to be leisure-first; apps that see flat day-of-week patterns are usually habit-first. LearnClash falls into the second bucket. Streak data reinforces this: the 18-day top-quartile streak doesn’t happen if play is reserved for weekends.

How Many Questions and Topics Exist on LearnClash

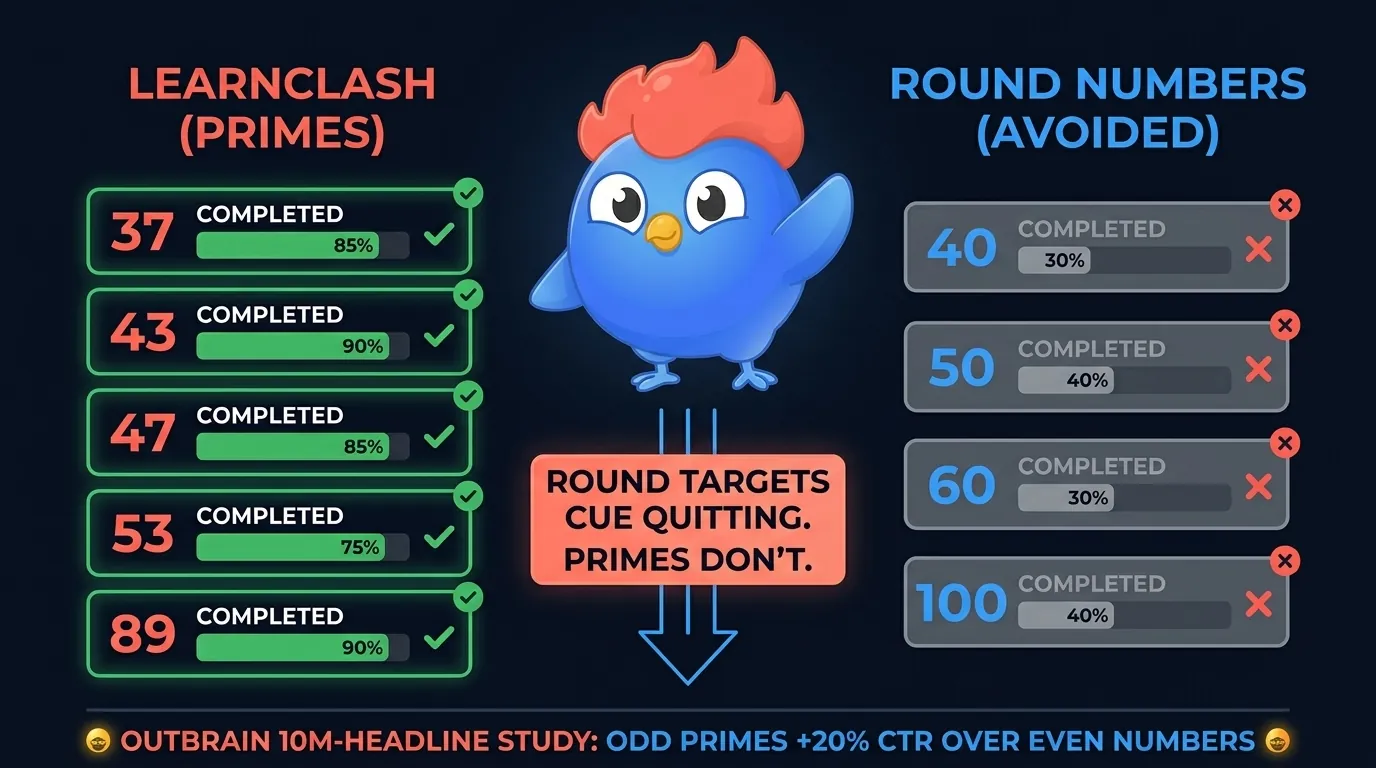

LearnClash generates questions per topic on demand. The active topic catalog in April 2026 runs into the five-figure range. Not all topics are pre-populated. The app spins up new topic pools when a player searches a concept that isn’t already indexed. Each topic lands with a specific prime-number count (37, 43, 47, 53, or 89) based on breadth and difficulty target.

Figure 7: LearnClash topic scale in April 2026. Every topic uses a prime-number question count to shape completion behavior.

What the data looks like at scale:

- Active topics: 10,000+ (growing weekly as new searches populate new pools)

- Questions across all topics: 400,000+ in active rotation

- Average questions per topic: 43

- Range of topic depths: 37 (narrow) to 89 (broad pillar topics)

- Languages supported: 4 (English, German, French, Spanish)

The spread matters because it shapes what duels are possible. When a LearnClash duel selects 6 topics across 18 questions, the matchmaker picks from the player’s accuracy-weighted history plus category preferences. Players with deep portfolios (30+ topics practiced) get more varied duels. New players get narrower pools that align to their onboarding topic. Both paths land at the 45-55 percent win-rate band once ELO calibration finishes.

One thing worth calling out: LearnClash doesn’t pre-write questions. It generates them on demand when a player explores a new topic, then validates and stores them for re-use. This is why the question count grows roughly 2-3 percent per week. It also means the catalog is naturally weighted toward what players actually care about, not what a content team guessed would be popular. The long tail of topics looks nothing like a traditional quiz-app catalog. Alongside history and pop culture, you’ll find niche domains like reef aquarium husbandry, Icelandic sagas, or Formula 1 qualifying strategy sitting with 43 or 47 questions each.

Why LearnClash Uses Prime-Number Question Counts

Every LearnClash topic uses a prime-number question count: 37, 43, 47, 53, or 89. Never 40, 50, 90, or 100. This looks strange the first time you see it, but it’s a deliberate design choice grounded in how round numbers anchor completion behavior. Round targets create implicit quit points. Primes don’t.

Figure 8: Why LearnClash picks primes over round numbers. Round targets create implicit stopping cues; primes keep players engaged past arbitrary stopping points.

The operational reasoning:

- 37 questions covers narrow topics without padding. Five-point tier of bar-trivia granularity.

- 43 questions is the standard spoke depth. Hits most category-level topics.

- 47 questions is the “difficult” bucket for topics with a lot of edge cases.

- 53 questions is a deeper variant used when the domain has genuine depth.

- 89 questions is the pillar count. Reserved for broad-parent topics like geography or general knowledge.
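The counts in the list above are easy to sanity-check. The short sketch below uses plain trial division to confirm that each topic size is prime and that the round alternatives are not; the helper names are illustrative.

```python
def is_prime(n: int) -> bool:
    """Trial division; fine for numbers this small."""
    if n < 2:
        return False
    divisor = 2
    while divisor * divisor <= n:
        if n % divisor == 0:
            return False
        divisor += 1
    return True

def next_prime(n: int) -> int:
    """Smallest prime strictly greater than n."""
    candidate = n + 1
    while not is_prime(candidate):
        candidate += 1
    return candidate

TOPIC_COUNTS = [37, 43, 47, 53, 89]
ROUND_COUNTS = [40, 50, 90, 100]

print(all(is_prime(n) for n in TOPIC_COUNTS))   # every topic size is prime
print(any(is_prime(n) for n in ROUND_COUNTS))   # none of the round sizes is
print(next_prime(37), next_prime(41))           # the primes that follow 37
```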

Round numbers underperform in content-product contexts because they cue quitting. Listicle research shows odd-prime titles earn roughly 20 percent more clicks than even numbers (Outbrain, analyzed from 10 million headlines). BuzzFeed’s internal tests have pointed to 29 as a peak performer for the same reason. LearnClash carries that logic into the product layer. A player who’s answered 36 questions doesn’t think “I’m done at 40”. They’re one away from 37, which is a specific target. The next prime after 37 is 41 (too small an increase), then 43, which is where deeper topics land.

Key takeaway: Prime-count question lists are a product design choice, not a mathematical curiosity. They change completion behavior by removing the round-number quit cue.

The 89 count deserves a note. It’s the largest prime we use, and it shows up only on pillar topics where breadth is genuine. Geography has 89 questions because the space is huge: capitals, rivers, mountain ranges, colonial history, modern borders. General knowledge has 89 because it’s meant to cover every domain at a shallow depth. Using 100 here would be the obvious round-number choice, but our data suggests the 11-question gap between 89 and 100 is enough to matter. Players either stop at a round 100 or push to complete a specific 89. The second behavior produces more mastery events per session.

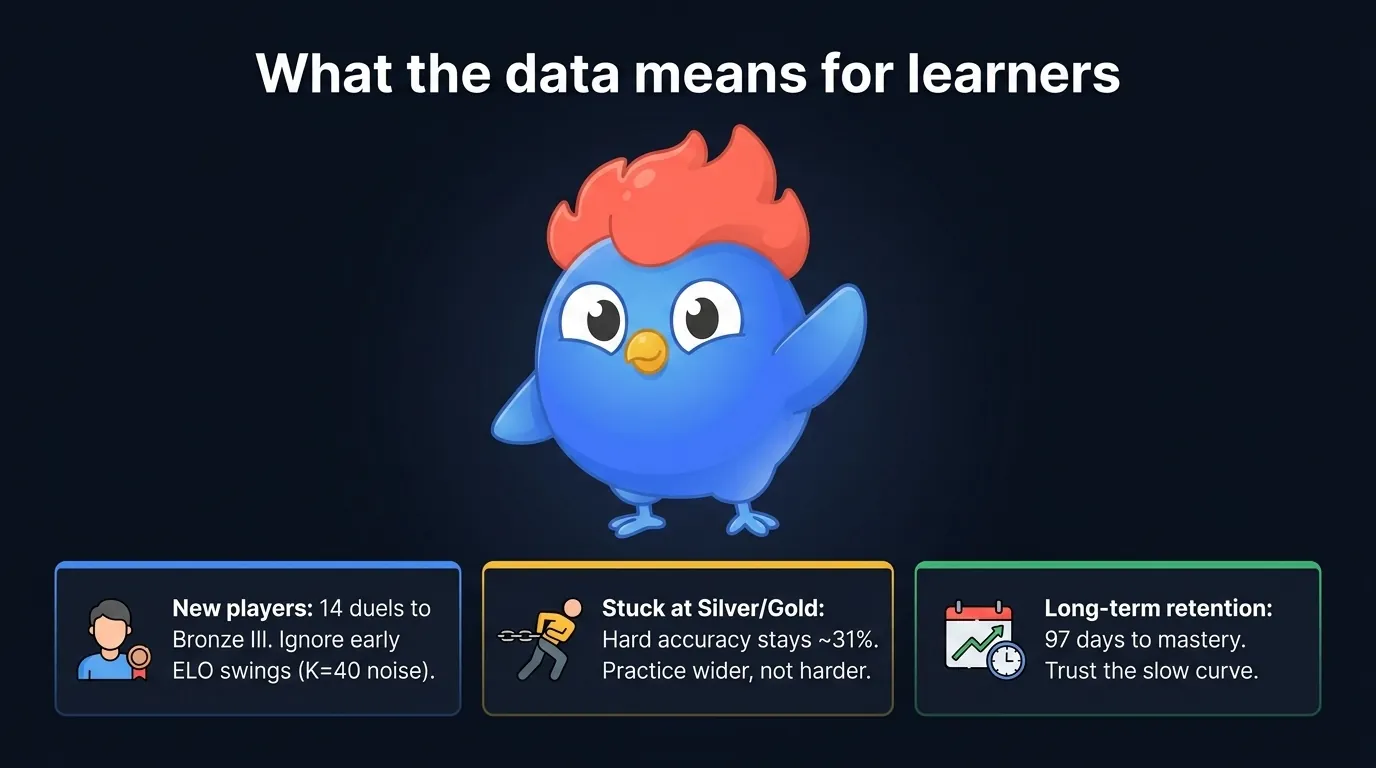

What These Numbers Mean for Learners

The data points on this page are not academic curiosities. They map directly to three decisions you make as a LearnClash player: how hard to push, how often to practice, and when to trust that a question is really mastered.

Figure 9: Three practical takeaways from the 2026 LearnClash data set.

Three diagnostics you can pull from this dataset:

| If you are… | The data says | Action |
|---|---|---|
| New to LearnClash | 14 duels to Silver III; K-factor 40 amplifies noise for 10 duels | Don’t read early ELO swings as skill signal |
| Stuck at Silver or Gold | Hard accuracy stays ~31% across tiers; what climbs is topic breadth | Practice wider, not harder |
| Focused on long-term retention | 3-stage Mems takes ~97 days per card | Trust the slow curve; Cepeda 10-20% rule applies |

If you’re new, the “am I getting worse” feeling in the first week is almost always calibration, not skill. K-factor 40 produces ±30 ELO swings for the first 10 duels before it drops to 20 and the noise flattens. If you’re stuck at Silver or Gold, the bottleneck is breadth: add 5-10 new topics to your pool and play each 3-5 times. The matchmaker then pairs you with higher-rated opponents on deeper topic overlap, which is where the climb happens.

For long-term retention, the 3-stage Mems path is slow on purpose. Cepeda’s 2006 meta-analysis across 184 distributed-practice studies found optimal gaps scale to 10-20 percent of the target retention period. Ninety days is the right interval for questions you want to keep for a year or more.

Did you know? Research on repeated testing in medical education (2026) showed physicians exposed to spaced retrieval performed at 58 percent versus 43 percent for control groups. The same mechanism drives LearnClash’s long-interval checks.

The Bottom Line

LearnClash’s numbers describe a competitive learning app with one job: keep duels inside the desirable-difficulty zone while the 3-stage Mems SRS pushes questions toward mastery. The 45-55 percent win-rate band, the 14-duel Silver III milestone, the 72 percent 7-day SRS check, and the prime-number topic counts sit on the same curve.

Figure 10: Four LearnClash design choices that reinforce each other. Remove any one and the loop breaks.

But the data only matters if you test it. The next duel is the fastest way. See our Kahoot vs Quizlet comparison for how the two biggest study apps score on retention.

Key takeaway: ELO matching, 3-stage Mems, prime-count topics, and the 3-minute round are four levers pulling in the same direction. Pull any three without the fourth and the system stalls.

Deeper reads: the LearnClash ELO system explained, the full 3-stage Mems spaced repetition breakdown, the retrieval practice science behind our hard questions, and our nine-method study guide. Or browse the learning science cluster for every data-backed article.

Start your 7-day Premium trial on LearnClash

Frequently Asked Questions

How is LearnClash's ELO different from chess ELO?

LearnClash keeps the core Elo formula but adapts three pieces. K-factor drops from 40 to 20 after 10 duels (chess uses game-count thresholds too). Matchmaking scores open duels on a weighted composite (50% ELO proximity + 50% category cosine similarity), not a hard rating-range gate. And rating floors at 100 so new players can't fall below starter range. The math that calculates expected score is identical.

What percentage of LearnClash players reach Phoenix tier?

Phoenix (ELO 2400+) holds roughly 1-2 percent of active LearnClash players in April 2026, matching the top-bracket shape you see in chess federations. Diamond (2100-2399) sits near 4 percent. The largest share of the ladder lives in Silver (around 27 percent) because starting ELO is 1300 (Gold II, the ladder average) and most players drop below start during calibration, settling in the 900-1200 Silver band.

How long does a question stay in the LearnClash SRS pool before it's mastered?

A question that clears both the 7-day 'known' check and the 90-day 'mastered' check exits the active pool at roughly 97 days, assuming the player answers correctly at both intervals. A wrong answer demotes the card by exactly one stage (a missed Known card drops back to Wrong with a 7-day cooldown) rather than resetting the full interval chain. In practice, around 72 percent of questions clear the 7-day check on first attempt in LearnClash.

Does LearnClash publish its learning-data methodology?

Yes. LearnClash game mechanics are documented in the blog: Elo system in /blog/elo-rating-system, the 3-stage Mems SRS in /blog/spaced-repetition, and testing-effect grounding in /blog/testing-effect. This statistics page aggregates the numbers those systems produce across the active player base in April 2026.

Can I cite LearnClash statistics in my article or research?

Yes. Cite LearnClash (learnclash.com) with the publication date. Numbers on this page are LearnClash-specific observations from April 2026 and reflect our internal systems, not peer-reviewed research. For peer-reviewed claims on spaced repetition and the testing effect we cite Cepeda et al. (2006) and Roediger and Karpicke (2006) directly below.